Introduction

Autonomous AI agents are AI-driven software entities that can make independent decisions and perform tasks on behalf of a user or organization. A natural evolution of such agents is enabling them to handle financial transactions – essentially making payments on behalf of users in various contexts (B2C purchases, B2B payments, internal enterprise expenses). This could mean a personal AI assistant paying bills or buying products for a consumer, an enterprise agent automating procurement payments, or even AI-to-AI transactions in supply chains. Enabling agents with spending power promises increased efficiency and 24/7 autonomy. However, it also introduces significant new considerations, as the existing payments infrastructure and rules have been built around human actors. In fact, dozens of startups and projects are now exploring “agentic payments,” recognizing that today’s financial systems were “explicitly designed for humans, not bots,” and need adaptation to support autonomous agents. This report examines the core technical, legal, and ethical challenges of agent-initiated payments, surveys emerging tools and mechanisms (from virtual credit cards to crypto wallets), and proposes models for how such payments can be managed safely. It also compares different approaches and outlines near-term solutions versus longer-term innovations.

- Introduction

- Core Challenges for Autonomous Agent Payments

- Tools and Mechanisms for Agent Payments

- Trust, Transparency, and User Control

- Adapting Existing Fintech Systems

- Role of AI and ML in Fraud Detection & Spend Control

- Conceptual Models for Agent-Based Payment Flows

- Short-Term Solutions vs. Long-Term Innovations

- Conclusion

- References and Links

Core Challenges for Autonomous Agent Payments

Enabling an AI agent to transact involves a convergence of technical, legal/regulatory, and ethical challenges. These must be addressed to ensure payments are executed correctly, securely, and in alignment with the user’s intent and society’s rules.

Technical Challenges

- Identity & Authentication: Financial systems rely on identifying who is making a transaction (via logins, cardholder info, etc.). An AI agent has no legal identity of its own – it operates under a user’s or organization’s identity. This creates ambiguity in authentication: how do we securely prove “this transaction was initiated by my authorized AI agent” and not a fraudster? Today’s payment products verify human identity (KYC, passwords, biometrics), which don’t directly apply to bots. We may need new authentication methods or digital certificates for agents. Some experts suggest extending the concept of Know-Your-Customer to “Know-Your-Agent” – verifying an agent’s identity/authorization as an intermediary acting for a user. Without robust identity, there’s risk of someone impersonating an agent or an agent going rogue.

- Fraud Detection & Security: Current fraud detection engines are tuned to flag non-human patterns (e.g. bots attempting card theft). In fact, a major theme in fraud prevention is distinguishing automated attacks from genuine users. If we want certain bots (our agents) to transact, those systems need to be retrained to differentiate “good bots” from malicious ones. This is non-trivial – it requires new behavioral models and possibly whitelisting or registering trusted agents. Additionally, agents can execute transactions faster and in greater volume than humans, which raises the stakes for any security lapse. A bug or exploit could trigger rapid, repeated payments. (A cautionary example is Knight Capital’s trading algorithm, which in 2012 went into an unintended loop and purchased $7 billion in stocks within an hour, nearly bankrupting the firm.) Payment systems must guard against an agent executing incorrect instructions in a loop or being manipulated by adversarial inputs.

- Permissions and Limits: In human-driven finance, there are checks like daily transfer limits, spending caps, and step-up authentication for large amounts. With agents, we need analogous or even more granular constraints. We can’t simply remove limits to let agents operate freely – that could be dangerous. Instead, we need programmable limits tied to context and goals (e.g. an agent can spend at most $500/day or $200 per transaction, only at certain merchants). These limits might be dynamic: an agent could have a smaller limit initially which grows as trust is established. Defining and enforcing such conditional limits is a technical challenge that spans both the agent’s design and the payment platform.

- Integration with Payment Systems: Agents must interface with existing payment networks (cards, bank accounts, crypto networks). This requires APIs and credentials. Storing and using those credentials securely is critical – e.g. API keys or virtual card numbers must be protected so that only the agent can use them. Managing secrets in an autonomous environment (possibly open-source agents) is a challenge. Additionally, many payment APIs assume a human or traditional server is initiating them. They may require things like two-factor authentication or CAPTCHA at points, which an agent would struggle with. Technical work is needed to create agent-friendly payment APIs or tools that allow an agent to trigger payments in a controlled way (some progress is being made, as discussed later).

- Regulatory Technical Constraints: For certain payment methods, giving an agent access can invoke compliance requirements. For example, if an agent directly handles credit card data (PANs, CVVs), it could bring the interaction under the scope of PCI-DSS (the Payment Card Industry Data Security Standard). This means the agent’s environment would need to be secured and audited like any payment processor’s – a high bar for a general AI agent. One workaround is to use tokenization or virtual cards via APIs so the agent never “sees” raw sensitive data (thus offloading most of the PCI compliance burden to the provider). Technical solutions like secure enclaves or hardware security modules might be employed to let an agent initiate a payment without exposing sensitive credentials.

- Reliability and Error Handling: An autonomous system must be robust against errors – e.g., what if a payment fails or an amount is wrong? Humans can notice and correct such issues; an agent needs logic to detect anomalies (like a charged amount vastly different from expected) and react appropriately (perhaps by aborting or seeking confirmation). Building this error-handling and reconciliation ability (e.g., verifying that a payment was successful and perhaps logging a receipt) is another layer of complexity.
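The programmable limits described above (a per-transaction cap, a daily cap, and a merchant allowlist) can be sketched as a check that runs before any payment is attempted. This is a minimal illustration under stated assumptions, not a real payment API: `SpendPolicy`, its methods, and the merchant names are hypothetical, and amounts are held as integer cents to avoid floating-point rounding.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class SpendPolicy:
    """Hypothetical per-agent spending policy; all amounts are integer cents."""
    per_txn_limit: int
    daily_limit: int
    allowed_merchants: frozenset
    spent_by_day: dict = field(default_factory=dict)

    def check_payment(self, merchant: str, amount: int, day: date) -> tuple:
        """Return (allowed, reason) without recording anything."""
        if amount <= 0:
            return False, "amount must be positive"
        if merchant not in self.allowed_merchants:
            return False, "merchant not on allowlist"
        if amount > self.per_txn_limit:
            return False, "per-transaction limit exceeded"
        if self.spent_by_day.get(day, 0) + amount > self.daily_limit:
            return False, "daily limit exceeded"
        return True, "ok"

    def record_payment(self, merchant: str, amount: int, day: date) -> bool:
        """Check the payment and, if allowed, count it toward the daily total."""
        allowed, _reason = self.check_payment(merchant, amount, day)
        if allowed:
            self.spent_by_day[day] = self.spent_by_day.get(day, 0) + amount
        return allowed


# $200 per transaction, $500 per day, two approved merchants (all hypothetical).
policy = SpendPolicy(20_000, 50_000, frozenset({"grocer", "utility"}))
today = date(2025, 1, 15)
results = [
    policy.record_payment("grocer", 20_000, today),   # within both caps
    policy.record_payment("utility", 20_000, today),  # within both caps
    policy.record_payment("grocer", 20_000, today),   # would exceed the daily cap
    policy.record_payment("casino", 1_000, today),    # merchant not on the allowlist
]
print(results)
```

Separating `check_payment` from `record_payment` lets the agent ask "would this be allowed?" without consuming budget. A dynamic limit that grows with trust could be layered on by adjusting `daily_limit` over time; that extension is not shown here.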

Legal & Regulatory Challenges

- KYC and Ownership of Accounts: Financial regulations require that accounts and payment instruments are tied to real identities (for anti-money-laundering and fraud accountability). An AI agent cannot “own” a bank account or credit card in the legal sense; the account will be in the name of a human or organization. This means any transaction the agent makes is legally the responsibility of that account owner. Today, users might share their card number or API key with an agent, essentially making the agent an extension of themselves. But this raises questions: if an agent misuses funds, can the user claim it was an unauthorized transaction? (Likely not easily, since it was technically authorized to use the credentials.) Liability frameworks may need updating. Some jurisdictions might require explicit user consent for an agent’s actions, treating the AI like a very literal power-of-attorney or “delegate” of the user.

- Authorization & Consumer Protection: Many consumer protections assume a clear divide between user actions and fraud. If an agent makes a mistake (say, pays the wrong person or too much), it’s unclear how existing laws apply. For instance, credit card chargeback rights allow a consumer to dispute fraudulent charges – but if the “fraud” was an agent acting on bad info or a bug, the bank may say the charge isn’t unauthorized because the credentials were willingly given to the agent. We might need new rules or at least clear terms of service covering agent-initiated payments. Similarly, in the EU’s PSD2 regulations, Strong Customer Authentication requires two-factor auth for many online payments. If an AI agent is initiating a payment, it might not be feasible to involve the human for 2FA each time – regulators may need to create extensions or exemptions for pre-authorized autonomous payments (similar to how they treat recurring payments or trusted beneficiaries today).

- Regulatory Compliance (AML, Sanctions, Reporting): When money moves, regulations around anti-money laundering (AML) and sanctions screening apply. If an agent can pick payees autonomously, there’s a risk a compromised or poorly supervised agent could violate these laws (e.g., paying a blacklisted entity). Financial institutions will need to ensure that agent-initiated payments go through the same compliance checks as any other – perhaps even extra checks if the agent’s decision process isn’t transparent. Additionally, regulations like GDPR (data protection) come into play: an agent handling financial data must protect user information. If agents store payment info or make decisions using personal data, those activities must be compliant (for example, if an agent is paying people, it may handle their personal data like names, account numbers – raising privacy obligations).

- Lack of Legal Personality: By law, only legal persons (individuals or registered organizations) can hold assets and enter contracts. An AI agent, no matter how autonomous, is not a legal person; it’s more akin to a tool or software service. This means any contract or payment an agent enters is on behalf of a human/legal entity. There is ongoing debate in legal circles about whether highly autonomous systems should have some form of legal status (some have likened it to a corporate entity or an “electronic personhood”), but currently there is no such framework. In practical terms, this places responsibility on the user or company deploying the agent. But it also means agents cannot assume legal responsibility. For example, if an agent signs a payment agreement or terms of service on a website when paying, the enforceability depends on whether the human owner is bound by the agent’s actions. Clear agreements are needed where users delegate authority to agents, possibly via terms that “actions taken by the agent are as if done by the user.” Without such clarity, disputes could arise.

- Liability and Insurance: Who is liable if an agent causes financial loss? If it’s a bug in the agent’s software, the developer of the agent might be one party; the user who deployed it is another; the financial institution might also be involved. For instance, say an enterprise’s AI agent erroneously pays a fraudulent invoice, losing money – the company might blame the AI vendor, while the vendor might invoke usage disclaimers. We may need new forms of insurance or liability contracts for autonomous agents (similar to how self-driving car liability is being approached). Businesses might need to explicitly insure against “AI operational mistakes.” Legally, having the user in the loop (even if just to review) provides safer harbor, which is why many early implementations will keep a human approval step for significant transactions. Over time, if laws evolve to accommodate autonomous decisions, we may see more freedom, but in 2025 this area is still grey.

- Ethical and Compliance Alignment: Regulations also cover fairness and ethics in finance (e.g., fair lending laws, non-discrimination). If an AI agent is negotiating or making decisions involving payments (like deciding which supplier to pay or who to hire and pay), it must not violate these principles. Ensuring an agent’s decision-making process is auditable and free of illegal bias is important – a challenge that merges legal and ethical domains.

Ethical Challenges

- Trust and Consent: Handing over one’s wallet (even a limited one) to an AI requires a leap of faith. Ethically, the user must fully consent to what the agent is allowed to do. This means clear communication about the agent’s powers and limits. There’s a risk of over-delegation: users might not realize what they’ve authorized until after something goes wrong. Ensuring informed consent – possibly through dashboards or explicit policy settings – is an ethical imperative. If the agent is operating for a company, there’s also the trust of stakeholders (managers, finance department) that the agent will follow company policies. A history of reliability will be needed before people trust agents widely. Notably, consumer experiences with voice assistants (like Alexa’s failed attempt at automated purchasing) showed hesitation – fear of misalignment with user intent was a key reason features like voice-shopping didn’t take off. Users feared the AI “ordering things I don’t want” or making mistakes. Building trust will be a gradual, iterative process.

- Transparency and Explainability: An ethical agent payment system should be transparent. Users should be able to find out why the agent made a particular purchase or payment. If the agent subscribed to a service or paid a supplier, it should log its reasoning (e.g., “Service X was due for renewal and within budget, so I paid it to avoid interruption”). This traceability helps ensure the agent is acting in the user’s interest and allows auditing for any missteps. Opaque “black box” decisions that result in monetary loss are hard to accept. In sensitive contexts like healthcare payments or investing, explainability isn’t just nice-to-have but may be required for compliance or fiduciary reasons.

- Preventing Abuse and Misuse: There’s an ethical duty to ensure agents cannot easily be co-opted for wrongdoing. This includes external bad actors tricking the agent (through prompt hacking or manipulating its inputs) to send money to fraudsters. It also includes the possibility of the agent’s owner intentionally using it as a scapegoat for unethical behavior (“Oh, it wasn’t me who violated sanctions by paying that party, it was my rogue AI”). Designing the system such that all agent actions are traceable to an owner prevents a “blame the AI” escape. Moreover, developers of agent payment systems should include safeguards against known fraud tactics – for example, an agent might verify invoices or use sanity checks to avoid common scams (like “change of bank account” frauds). Ethically, if we create agents with financial power, we should imbue them with some fraud detection of their own or at least caution in suspicious scenarios.

- User Control vs. Autonomy: There’s a balance to strike between giving the agent autonomy (so it’s useful) and maintaining user control (so it’s safe). Ethically, the user’s autonomy and intent should remain primary. The agent is an instrument of the user’s will, not an independent economic actor with its own goals. This may sound obvious, but with advanced AI there’s always a concern about the agent developing unintended objectives or pursuing a task at all costs (the classic “alignment problem”). In finance, a misaligned agent could, say, decide the goal “minimize expense” means it should cut corners or refuse payments that are actually important (imagine an agent that tries to save money by not paying a crucial vendor on time). Ensuring that the agent’s objectives are properly set by the user and that it knows when to defer to human judgment is an ethical design issue. Part of this is incorporating fail-safes: for example, an agent might pause and ask for confirmation if it encounters a situation not covered by its training or rules (“This expense is unusual, do you want me to proceed?”).

- Impacts on Stakeholders: Autonomous payments can have ripple effects. In B2B, if one company’s agents automatically negotiate and pay invoices, suppliers might need their own agents to keep up – potentially shifting how business is conducted (speed, terms, etc.). Ethically, we should consider if smaller players or less tech-savvy users could be disadvantaged. Also, the employment impact: if AI agents handle accounts payable or procurement, human roles in those areas might change or diminish. Organizations deploying these agents have an ethical responsibility to manage the transition for employees (reskilling, etc.). Lastly, consider error impacts: if an agent makes a payment error, who bears the cost? Likely the user/company does – so users must be made fully aware of the risks and have recourse or compensation mechanisms for agent failures (perhaps through insurance as mentioned, or vendor guarantees). Transparency about these policies is part of ethical use.
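The transparency and traceability points above (every payment logged with its reasoning and tied to an accountable owner) could look roughly like the following. This is a sketch only: the record fields, `make_record`, and `to_log_line` are hypothetical names chosen for illustration, not part of any existing agent framework.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class PaymentAuditRecord:
    """One immutable log entry per agent-initiated payment (hypothetical schema)."""
    owner_id: str      # the human or organization accountable for the agent
    agent_id: str
    payee: str
    amount_cents: int
    currency: str
    reasoning: str     # the agent's stated justification, in plain language
    timestamp: str     # ISO 8601, UTC


def make_record(owner_id: str, agent_id: str, payee: str, amount_cents: int,
                currency: str, reasoning: str,
                now: Optional[datetime] = None) -> PaymentAuditRecord:
    """Build a record, refusing payments that carry no explanation."""
    if not reasoning.strip():
        raise ValueError("a payment must be logged with a reason")
    moment = now or datetime.now(timezone.utc)
    return PaymentAuditRecord(owner_id, agent_id, payee, amount_cents,
                              currency, reasoning, moment.isoformat())


def to_log_line(record: PaymentAuditRecord) -> str:
    """Serialize to one JSON line, suitable for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


record = make_record(
    "user-123", "agent-7", "Service X", 1_999, "USD",
    "Service X was due for renewal and within budget, so I paid it to avoid interruption",
    now=datetime(2025, 1, 15, 12, 0, tzinfo=timezone.utc),
)
print(to_log_line(record))
```

Requiring a non-empty `reasoning` field and an `owner_id` on every record is one simple way to make "blame the AI" harder: no payment exists in the log without an explanation and an accountable party.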

In summary, while autonomous agent payments promise efficiency, they sit at the intersection of cutting-edge technology and legacy financial norms. Identity/authentication hurdles, new fraud vectors, unclear liability, and trust issues must all be tackled before agents can freely spend on our behalf. The next sections explore solutions and tools that aim to address these challenges.

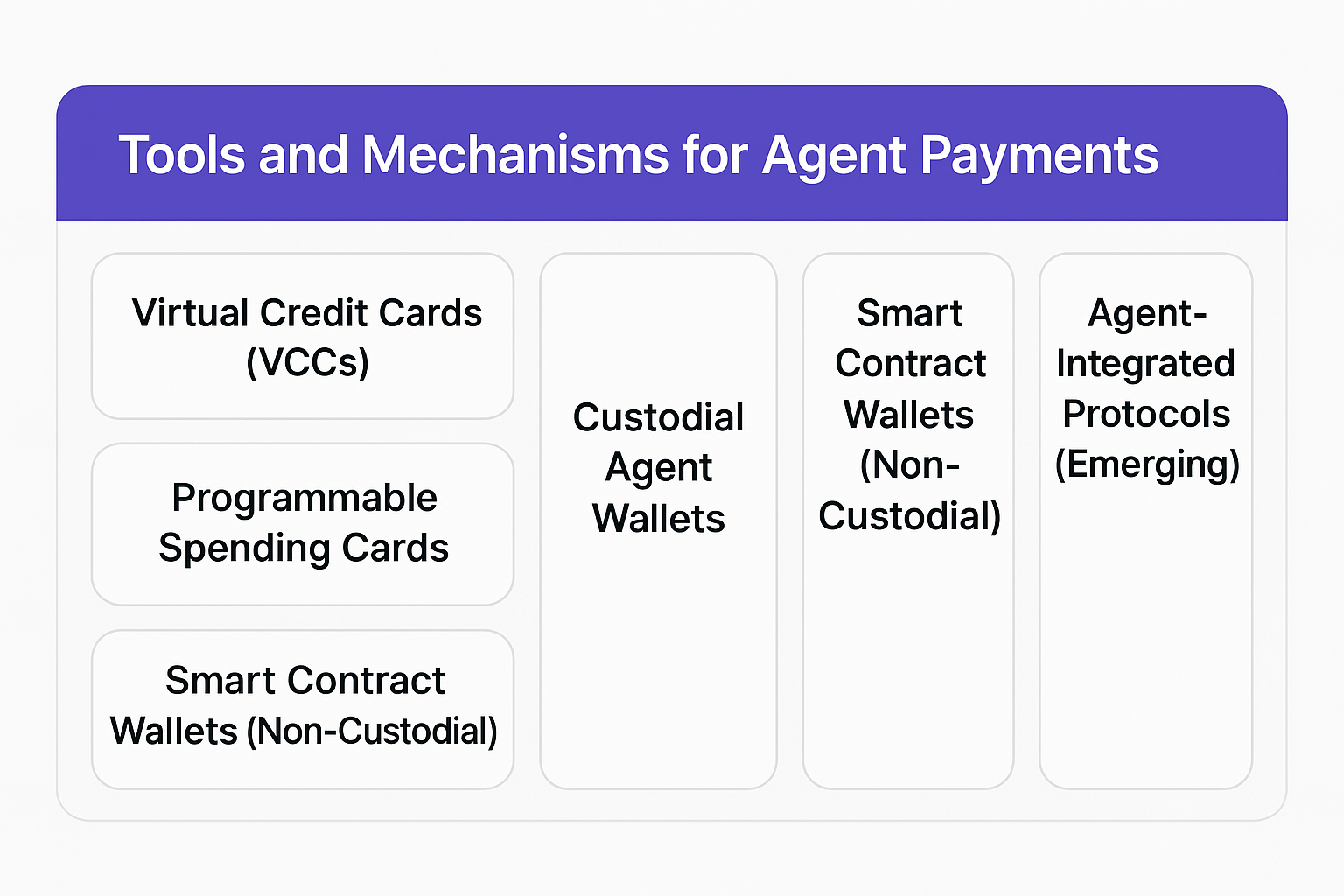

Tools and Mechanisms for Agent Payments

To empower AI agents with the ability to pay while managing the risks, a variety of financial tools and mechanisms are being explored. These range from leveraging existing fintech solutions (like virtual cards and spend management platforms) to using cryptocurrencies and smart contracts for more programmable control. Below, we examine key mechanisms and how they can be used in B2C, B2B, or enterprise settings to allow agent-initiated payments.

Virtual Credit Cards (VCCs)

Virtual credit cards are disposable or semi-permanent card numbers that can be created on-demand and tied to an underlying credit card or bank account. They carry a fixed spending limit and often can be restricted by use. For autonomous agents, VCCs are a straightforward way to grant spending access:

- How they work: A virtual card typically has a 16-digit number, expiration, and CVV like a normal card, but no physical form. It can be used for online payments. Many banks or services (e.g., Capital One Eno, fintechs like Privacy.com, or corporate card providers) let users generate virtual card numbers instantly via API or app. You can usually set a maximum charge amount or an expiry date for each number.

- Agent usage: An AI agent could request a virtual card number via an API call when it needs to make a payment. For instance, if an agent is tasked with purchasing an item up to $50, it could obtain a VCC limited to $50 and use it at checkout. Because the VCC is unique to that transaction or vendor, if anything goes awry (the number is stolen or the agent tries to overspend), the damage is capped. Stripe’s early foray into agent payments uses this method – Stripe’s developer toolkit for AI agents can generate virtual card numbers that the agent can use to pay external services. Essentially, the agent is given a temporary card with preset limits, solving the problem of how it “carries” a credit card.

- Benefits: Virtual cards leverage the ubiquity of the card network (nearly any online merchant accepts cards) while adding control. They can be single-use (one transaction then auto-close) or vendor-locked (only charges from a specific merchant ID will go through), which prevents misuse. Since they draw from an existing account, the user doesn’t have to pre-fund a new wallet; it’s using their normal credit line or balance, but safely partitioned. Risk is isolated: if an agent makes a mistake or a bad actor somehow gets the card info, that virtual card can be shut down without affecting the main account. Virtual cards are also familiar to the ecosystem, so using them is less likely to run into merchant-side issues.

- Drawbacks: VCCs share limitations of the card networks. They typically can’t be used for everything – for example, paying another individual (P2P) or a bank transfer. They are best for traditional purchase scenarios (buying goods/services online). Also, managing many virtual cards could become complex (though automation helps) – e.g., an active agent might generate dozens of card numbers per day; reconciling those to actual expenses and making sure unused ones are closed is a consideration. Additionally, while an agent can be given a VCC, some jurisdictions might still consider that “the user’s card.” If something goes wrong, the user is still on the hook for the charges initially. From a technical standpoint, using VCCs means the agent will handle card data – which as noted implicates security. Ideally the agent would receive the card number via a secure API and not log it or store it beyond the payment session.

- Use cases: In consumer (B2C), a personal agent could use VCCs to, say, order groceries or pay for online subscriptions on behalf of the user. The user’s bank app might let them create a virtual card for the agent with a monthly limit for groceries. In enterprise (B2B), an agent could use a virtual corporate card to auto-pay SaaS subscriptions or online services needed for business, each limited to an amount per vendor. Because VCCs can often be tagged with descriptors, companies can track which expense was by which agent.

Overall, virtual cards are a pragmatic short-term tool to let agents pay within controlled bounds. They piggyback on existing financial infrastructure (cards) while limiting exposure. As noted, Stripe and others are actively implementing this approach, indicating it’s one of the first viable steps for agent payments.
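To make the single-use, capped, merchant-locked behavior concrete, here is a toy model of the issuer-side checks a virtual card might apply. It is an assumption-laden sketch: `VirtualCard` and its fields are hypothetical and do not correspond to any real issuer's API, and no actual card data is involved.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class VirtualCard:
    """Toy model of a virtual card; not any issuer's real API."""
    limit_cents: int
    merchant_lock: Optional[str] = None  # None means any merchant is accepted
    single_use: bool = True
    spent_cents: int = 0
    is_open: bool = True

    def authorize(self, merchant: str, amount_cents: int) -> bool:
        """Approve or decline a charge, mimicking issuer-side controls."""
        if not self.is_open or amount_cents <= 0:
            return False
        if self.merchant_lock is not None and merchant != self.merchant_lock:
            return False
        if self.spent_cents + amount_cents > self.limit_cents:
            return False
        self.spent_cents += amount_cents
        if self.single_use:
            self.is_open = False  # auto-close after the first approved charge
        return True

    def close(self) -> None:
        """Shut the card down, e.g. after suspected misuse."""
        self.is_open = False


# A card capped at $50 and locked to one (hypothetical) merchant.
card = VirtualCard(limit_cents=5_000, merchant_lock="bookshop")
first = card.authorize("bookshop", 4_200)   # within the cap, so approved
second = card.authorize("bookshop", 500)    # declined: the card has already been used
print(first, second, card.is_open)
```

Because the cap and the merchant lock live on the card rather than in the agent, a compromised or buggy agent cannot spend more than the card allows, which is the damage-capping property described above.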

Programmable Spending Cards and Accounts

Moving beyond one-off virtual cards, there are platforms that allow fully programmable payment cards or bank accounts. These are highly relevant for giving AI agents ongoing spending capability with guardrails.

- Card Issuing APIs: Fintech services like Stripe Issuing, Marqeta, and others provide APIs to create and manage cards (both virtual and physical) with custom rules. For example, Stripe Issuing’s API allows developers to create employee expense cards, set spend limits, and even restrict merchant categories. Through these APIs, you can do things like: “Create a card for Agent X, allow only $1000 total spend, max $100 per transaction, only at travel-related merchants (hotels, airlines), and have it expire in 60 days.” This is powerful for enterprise use. An AI agent given such a card effectively has a company-sanctioned credit card, but one that the company can monitor and control in real-time. If the agent tries something outside its bounds, the transaction simply declines. This is similar to how companies issue corporate cards to employees with limits – but here the “employee” is an AI. The difference is, with an AI we might want even tighter control (like per-transaction approvals or automated freezes on certain triggers). The API nature of these services means the controls can be adjusted dynamically by software, which is ideal for integration with an AI governance system.

- Approval and Limit Workflows: In an enterprise scenario, you could integrate an agent’s spending with approval workflows. For instance, an agent needing to make a $5,000 purchase might automatically generate a request that goes to a manager’s queue; once approved, the agent’s card limit could be temporarily raised or a one-time token issued to complete that purchase. Some modern spend management platforms (Ramp, Brex, etc.) already allow setting approval requirements for employee-initiated transactions – adapting these for AI agents is a small leap. In fact, giving an AI its own card under the company’s account is analogous to giving a new team member a card, just with perhaps more stringent rules.

- Neobank Accounts with APIs: Similarly, on the banking side, some banking-as-a-service providers let you create sub-accounts with their own balances and transaction limits. An AI agent could be assigned a sub-account; it can only spend funds from that account. The business or user periodically tops up the sub-account (creating a budget envelope, effectively). The agent uses payment APIs (ACH transfers, wire, instant payments) from that account. Because the account is separate, if the agent messes up, the damage is confined to that sub-account’s balance. The controls at the bank level (like not allowing overdraft or flagging unusual payees) still apply. This is more relevant for B2B payments where card rails might not be used (e.g., paying invoices via bank transfer).

- Programmability and APIs: The key advantage of using these modern fintech solutions is flexibility. We can programmatically set rules like: spending limits that reset periodically, merchant whitelists/blacklists, time-based restrictions (maybe an agent can only spend during business hours or only this week), and multi-factor authentication triggers (e.g., require a second API call confirmation for transactions over X). For example, infrastructure is emerging that can “generate single-use payment credentials, set strict spending limits, restrict transactions to specific merchants, and require human approval above thresholds” – exactly the toolkit needed to manage agent spending. These capabilities, once integrated, give fine-grained control to balance autonomy and safety.

- Transparency and Monitoring: Cards and accounts issued in this way can feed into dashboards and reports. So, a company could in real-time see Agent A spent $200 on AWS, Agent B spent $50 on an API service, etc. This real-time visibility is crucial for trust (addressed more in the next section on transparency). For instance, one startup (Skyfire) that focuses on agent payments provides a dashboard to “view exactly how much, and where, their agent is spending.” Such dashboards likely leverage the underlying card/account APIs to pull transaction data.

- Examples: A concrete scenario: imagine an internal enterprise agent that auto-scales cloud infrastructure. It needs to pay the cloud provider when new servers are added. The company could issue a virtual card to this agent with a monthly cap of say $10,000. The agent’s code uses this card to pay the cloud bills as they come due. The system might restrict the card to only work with that cloud provider (merchant lock) for security. If the cloud bill spikes unexpectedly, the agent might hit its limit and then pause or seek approval, rather than bankrupting the budget. Another example on the consumer side: an AI shopping assistant on your phone could have a “virtual debit card” linked to your account but with maybe $200 limit per week and perhaps only for a list of stores you approved. If the AI finds a good deal and wants to purchase it for you, it uses that card – you get an immediate notification and can intervene if needed. If everything is good, the purchase goes through under the hood; if not, you cancel the virtual card.

- Challenges: While powerful, these programmable solutions require that the user or company can get access to such an API platform. Large enterprises might partner with fintech providers or use in-house solutions. Individual consumers have fewer options, though some challenger banks and budgeting apps do offer multiple virtual cards. Another challenge is ensuring the programmed limits truly align with the agent’s goals – set them too tight and the agent may fail at its task; set them too loose and you defeat the purpose. There’s also the risk of complexity: managing potentially many rules across many agents could get unwieldy, calling for an “agent spend policy manager” of sorts in the future.

In summary, programmable cards/accounts provide a robust way to give agents spending power with guardrails. They effectively let us “codify policy in the payment instrument” – a very direct method of risk mitigation. This approach is well-suited for enterprise and high-trust scenarios where the overhead of setting up these systems is justified by the value of automation.
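The card rules and approval workflow discussed in this section can be combined into one authorization decision with three outcomes: approve, decline, or escalate to a human. The sketch below uses the example figures from the text ($1,000 total, $100 per transaction, travel merchants only, a fixed expiry) and adds an assumed $75 approval threshold. `CardControls`, `Decision`, and `decide` are hypothetical names and do not mirror Stripe Issuing, Marqeta, or any other provider's API.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class Decision(Enum):
    APPROVE = "approve"
    DECLINE = "decline"
    NEEDS_HUMAN = "needs_human_approval"


@dataclass(frozen=True)
class CardControls:
    """Hypothetical rule set for an agent's card; amounts are integer cents."""
    total_limit: int         # lifetime cap
    per_txn_limit: int       # hard cap per transaction
    approval_threshold: int  # at or above this amount, a human must sign off
    allowed_categories: frozenset
    expires_on: date


def decide(controls: CardControls, spent_so_far: int, amount: int,
           category: str, on: date) -> Decision:
    """Evaluate one authorization request against the card's rules."""
    if on > controls.expires_on:
        return Decision.DECLINE
    if category not in controls.allowed_categories:
        return Decision.DECLINE
    if amount <= 0 or amount > controls.per_txn_limit:
        return Decision.DECLINE
    if spent_so_far + amount > controls.total_limit:
        return Decision.DECLINE
    if amount >= controls.approval_threshold:
        return Decision.NEEDS_HUMAN
    return Decision.APPROVE


controls = CardControls(
    total_limit=100_000,
    per_txn_limit=10_000,
    approval_threshold=7_500,  # assumed value, not taken from the text
    allowed_categories=frozenset({"hotel", "airline"}),
    expires_on=date(2025, 3, 1),
)
day = date(2025, 1, 10)
print(decide(controls, 0, 5_000, "hotel", day))         # small travel charge
print(decide(controls, 0, 8_000, "airline", day))       # large enough to escalate
print(decide(controls, 0, 8_000, "electronics", day))   # wrong merchant category
print(decide(controls, 95_000, 6_000, "hotel", day))    # would exceed the total cap
```

Hard declines are checked before the escalation rule so that a human is only asked about payments the card could actually make.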

Cryptocurrency Wallets and Smart Contracts

Cryptocurrency and blockchain-based payment systems offer an alternative route for agent payments. They are inherently digital and programmable, which aligns well with autonomous agents. Two main modes exist here: custodial wallets (where a third-party service holds crypto or fiat for the agent) and non-custodial smart contract wallets (where the agent itself interacts with a blockchain directly, under set rules).

Custodial Wallets (Including Stablecoins): A custodial setup means a service (such as a crypto exchange or a specialized payment platform) holds funds for the user and exposes an API for the agent. The funds could be in fiat currency or tokenized as stablecoins for on-chain use. The agent doesn’t hold the private keys; instead, it has API credentials to instruct the custodian to send payments. For example, an agent could use an exchange’s API to send 0.1 ETH to an address to pay for some service, or use a stablecoin custody service to pay $100 in USDC to a supplier’s wallet. The advantage is that the custodian handles all the blockchain interactions, the security of keys, and often compliance (KYC/AML on accounts). This is similar to how a traditional bank API might work, but with crypto as the underlying settlement medium. Skyfire’s network is an example: businesses deposit USD with Skyfire, which converts it to USDC (a stablecoin) and assigns it to the agent’s digital wallet; the agent then transacts in USDC on the network. From the user’s perspective, it’s like a special bank account for the agent; under the hood, crypto facilitates fast, programmable transfers. Custodial approaches can also seamlessly convert back to fiat – e.g., the agent could pay a vendor in crypto which the vendor’s side immediately converts to local currency.

- Pros: The heavy lifting (security, speed, compliance) is handled by the service. The agent just calls an API – simpler integration. Also, transactions can be instant and global if using crypto rails (no waiting for bank hours). Custodians can often provide added safeguards: for instance, they might implement spending limits or risk checks on outgoing transactions (much like a bank would).

- Cons: Trusting a custodian means you rely on that third party’s solvency and security. It also means the user doesn’t fully control funds – if the custodian has an outage or freezes the account suspecting issues, the agent is stopped. Additionally, using a custodian often involves fees (Skyfire, for example, charges a percentage per transaction). From a regulatory view, custodians are typically regulated entities (money transmitters or the like), which can be positive (more accountability) but also could limit availability (KYC requirements, not available in all regions, etc.).

- Use cases: A business might use a custodial wallet service to give its AI agent a small treasury in crypto to automate micropayments across borders (since a stablecoin transfer can be cheaper and quicker than international bank payments). Or an individual could top up an agent’s account on a platform with $100 in stablecoin to let it buy digital goods or IoT services that accept crypto. Custodial solutions often act as a bridge – e.g., the agent pays a SaaS API in crypto via the custodian, and the SaaS provider might auto-convert that to USD on their end. This offloads the need for both sides to handle multiple currencies.

Smart Contract Wallets (Non-Custodial): In a non-custodial approach, the agent (or user) holds the private keys to a crypto wallet – ideally a smart contract-based wallet that can enforce rules. Blockchains like Ethereum support smart contract accounts where one can encode spending policies: multi-signature (requiring multiple approvals), daily spending limits, whitelisted addresses, and more. By giving an agent access to such a wallet, you can imbue it with programmatic constraints at the wallet level. For example, a smart wallet could be coded to allow at most 1 ETH of spending per day and only to certain contract addresses (perhaps known service providers). If the agent tries to send more, the blockchain transaction itself will fail the rule, no central authority needed. This is powerful because it’s trustless enforcement – even if the agent is compromised, it cannot violate the hard-coded limits of the wallet. Multi-signature is another feature: you could set it so that any transaction above a threshold requires a second signature (which could be the user’s mobile wallet or a co-signer service). This is analogous to requiring human approval for big spends, but executed via cryptographic rules. Smart wallets can also have social recovery or admin keys, so the user can revoke the agent’s access if needed by changing keys.

- Pros: Extremely flexible and autonomous. The wallet can operate 24/7 and doesn’t rely on third parties once deployed. Payments can be fully programmable: you could implement complex logic like “allow $X per day unless a specific event, then allow $Y,” etc. Also, crypto wallets can interact directly with decentralized services – for example, an agent could not only pay but also enter into smart contracts, escrow funds, trade on decentralized exchanges, etc., all under predefined constraints. This opens the door to agent-driven DeFi activities (though that’s an advanced scenario). With blockchain, microtransactions are feasible (depending on fees) and can be as low as fractions of a cent on some networks – enabling pay-per-task at scale. Another benefit is that on some newer networks (or with Ethereum’s upcoming account abstraction features), one can pay transaction fees in stablecoin or have fees sponsored, making it easier for an agent to operate without needing to manage gas in multiple tokens.

- Cons: The main challenge is that the wider world still runs on fiat. If an agent’s wallet is full of crypto, but the vendor it needs to pay doesn’t accept crypto, you need a bridge (which often means a custodian or a crypto card). There’s progress – for instance, Visa has piloted letting businesses settle in USDC stablecoin, which blurs the line – but generally an on-chain payment will eventually hit an off-chain interface. Another con is complexity: developing and auditing a secure smart contract wallet is non-trivial. Bugs in the wallet code could be disastrous (though one can use standardized, audited templates). Also, users have to manage keys – if the key controlling the agent’s wallet is lost or compromised, funds can be lost (though social recovery can mitigate that). In terms of regulation, non-custodial crypto puts more of the burden on the user, since you’re bypassing regulated intermediaries. This gives freedom but also the responsibility to follow the law, such as reporting gains and ensuring you’re not transacting with sanctioned addresses (tools exist for compliance on-chain, but it’s a new area). Scalability is another consideration: major blockchains can have congestion or high fees at times, which could hamper an agent that needs frequent transactions – but layer-2 networks and alternative blockchains can address this (and many agent applications could run on those).

- Use cases: A possible use case is machine-to-machine (M2M) payments. Imagine an electric car that is an autonomous agent: it could have a smart contract wallet that automatically pays charging stations or tolls in crypto as it uses them. Each payment might be tiny, but executed on the fly without human involvement, using a predefined budget in the wallet. Another use: An AI content creator agent that sells its outputs could accept crypto from buyer agents and automatically split the revenue (maybe sending a portion to its owner, a portion to a developer, etc.) according to a smart contract. This is essentially an on-chain business run by agents. While these scenarios are still experimental, the autonomy and programmability of smart contracts align naturally with autonomous agents, as noted by various industry experts who see blockchain as a key infrastructure for the “AI agent economy.”
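To make the wallet-level rules described above concrete, here is a minimal off-chain sketch in Python of the checks a smart contract wallet might enforce: a recipient whitelist, a daily cap, and a co-signature threshold. It is a plain simulation under stated assumptions, not contract code. The class name `SmartWalletPolicy`, its fields, and the example addresses are hypothetical, and a real wallet would implement the same logic on-chain in a contract language.

```python
from dataclasses import dataclass, field
from decimal import Decimal


@dataclass
class SmartWalletPolicy:
    """Hypothetical off-chain model of rules a smart contract wallet could enforce."""
    daily_limit: Decimal            # e.g. at most 1 ETH per day
    allowed_recipients: frozenset   # whitelisted addresses (assumed known providers)
    cosign_threshold: Decimal       # above this amount a second signature is required
    spent_by_day: dict = field(default_factory=dict)  # day index -> amount spent

    def authorize(self, to: str, amount: Decimal, day: int, cosigned: bool = False) -> bool:
        """Return True and record the spend only if every rule passes."""
        if to not in self.allowed_recipients:
            return False  # recipient is not whitelisted
        if amount > self.cosign_threshold and not cosigned:
            return False  # large spend without the second signature
        spent = self.spent_by_day.get(day, Decimal("0"))
        if spent + amount > self.daily_limit:
            return False  # would exceed the daily cap
        self.spent_by_day[day] = spent + amount
        return True


wallet = SmartWalletPolicy(
    daily_limit=Decimal("1"),
    allowed_recipients=frozenset({"0xCHARGER"}),
    cosign_threshold=Decimal("0.5"),
)
print(wallet.authorize("0xCHARGER", Decimal("0.4"), day=1))
print(wallet.authorize("0xUNKNOWN", Decimal("0.1"), day=1))
```

The point of the design is that a rejected transfer never changes the recorded spend, which mirrors how a failed on-chain transaction leaves the wallet's state untouched.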

It’s worth mentioning that hybrid models exist too: for example, an agent could use crypto for certain transactions (for programmability or global reach) and traditional payments for others, depending on context. Also, crypto protocols are evolving that specifically cater to agents – e.g., projects that let agents have decentralized identities and reputation on-chain, which can be tied to wallets for trust.

In summary, crypto wallets (especially smart contract-based) offer the maximum programmability and global interoperability for agent payments, which is a huge opportunity. They remove many constraints of legacy systems (no need to fit into human-oriented anti-bot measures, no 9-5 banking hours, etc.). However, integrating them with the existing economy and ensuring security introduces new challenges. Custodial wallets serve as a more immediate stepping stone, bridging between the traditional fiat world and the crypto world – giving agents some benefits of crypto (like instant settlement) without all the complexity, at the cost of some centralization. As the landscape matures, we may see increasing use of crypto rails for agents, especially for microtransactions and cross-border interactions where they hold strong advantages.

(Next, we consider how to maintain trust and control when using these payment tools, and how existing fintech infrastructure can adapt to support agent transactions.)

Trust, Transparency, and User Control

Because autonomous payments can be risky, implementing strong trust and control mechanisms is critical. The user (or business) needs confidence that the agent will act only within approved bounds and that they can monitor and override its actions. Here we discuss how to achieve transparency and maintain user control, even as agents operate with some independence.

- Granular Spending Controls: As introduced earlier, one of the primary ways to trust an agent is to limit what it can do. This includes setting spending limits – e.g., an agent can spend at most $X per day or per week. Many implementations are doing exactly this. For example, Skyfire’s agent wallets allow businesses to deposit a set amount and set limits on how much an AI agent can spend in one transaction and over time. If the agent tries to exceed those limits, the system will block it and “ping a human to review it.” This kind of control ensures that even if an agent misbehaves or is hacked, it cannot drain more than the allotted amount without human intervention. Besides monetary limits, other dimensions of control include merchant or category restrictions (the agent can only spend at approved vendors or types of services), and time-based restrictions (e.g., only allow transactions during certain hours or only allow a certain number of transactions per day). The use of single-use or task-specific credentials enhances this: if each payment requires generating a new virtual credential with tight limits, the scope of any single failure is contained. Essentially, these controls act as automated approval policies that the agent must live within.

- Real-Time Alerts and Approvals: Even with limits, it’s important the user is kept in the loop, especially for larger or unusual transactions. Agents should be integrated with notification systems – e.g., push notifications or emails to the user whenever the agent spends money. This mirrors what many banks do for card transactions, but here it’s even more critical as the user didn’t directly initiate the action. If an agent attempts something outside its normal pattern or above a threshold, an approval workflow should kick in. The earlier example from Skyfire shows one approach: attempts over the limit trigger a human review. Similarly, the list of controls from Stripe’s toolkit and others includes “require human approval above thresholds.” This could be implemented via a mobile app where the user taps “allow” or via an email link, etc. The goal is to stop runaway spending while minimally impacting useful autonomous operation. Over time, as trust builds, some thresholds might be raised, but the mechanism stays as a safety net.

- Transparency Dashboards: Users and organizations will want to see what agents are doing with funds at a glance. A dashboard that shows all agent transactions, budgets remaining, pending approvals, and any policy violations is essential. This is already part of some solutions – e.g., Skyfire offers a dashboard for exactly this. In an enterprise scenario, such dashboards might integrate with existing expense tracking or ERP systems. They not only build trust (because everything is visible) but also help in auditing and accounting. For instance, an enterprise could require that the agent attaches a note or a receipt for each payment (the agent could be programmed to save confirmation pages or invoices). These records then flow into accounting systems like any other expense. Transparency also means clarity on the agent’s authority: clearly delineating which payments were made by AI (perhaps tagged differently in statements) versus by humans could be part of compliance reporting. Some financial institutions might even mark transactions initiated via an API/agent with a flag in their data – allowing different handling or filtering.

- Know Your Agent (KYA) and Verification: As mentioned, financial institutions are considering services akin to KYC but for AI agents. The idea is to have a trusted third party or the financial institution itself validate an agent’s identity and the link to its owner. This could involve issuing a digital certificate or token to the agent that it presents with each transaction (proving “I am the AI agent authorized by Alice, client of Bank X”). If widely adopted, this could greatly improve trust in the ecosystem: merchants and banks could accept payments from verified agents more readily. Mastercard, for instance, has hinted that verifying agents could be a new role for financial institutions. In practice, this might mean when you create an agent, you register it with your bank which then gives it a sort of sub-identity. Later, the bank can assure any recipient that “Payment coming from Agent123 is backed by Alice’s account and policy.” This prevents random bots from masquerading as legitimate. It’s a form of digital identity for non-human actors. While still conceptual, it aligns with how trust could be scaled – similar to how TLS/SSL certificates let browsers trust the web servers they connect to, agent certificates could allow payment networks to trust agent-initiated actions.

- User Override and Emergency Stop: At any point, the user should have the ability to pause or stop an agent from spending. This might be as simple as a “freeze card” button that instantly shuts off the agent’s virtual card or empties its budget to $0. It could also be a “pause agent” command that the agent’s platform listens to, causing the agent to halt all operations (not just payments). Knowing that they can quickly intervene gives users confidence to let the agent act in the first place. Additionally, there should be clear procedures if something goes wrong – e.g., how to dispute a payment the agent made in error, how to recover funds (if possible), or how to hold the agent’s creator accountable if it was a software flaw. In enterprise settings, this might translate to an admin console where administrators can disable an agent’s financial privileges if it behaves unexpectedly.

- Audit Trails and Accountability: Every payment an agent makes should have an audit trail attributing it to the agent (and thus the user/company). For internal use, this helps in tracing decisions (“we paid this supplier because the agent thought stock was low, here’s the log”). For external accountability, if an agent ever does something malicious (say it was subverted to launder money), investigators need a trail to the owner. Embedding metadata in transactions that reference the agent ID (where possible) or logging it in internal bank logs can facilitate this. It’s part of transparency – not only does the user see what the agent did, but the system as a whole keeps a record for accountability. This is analogous to having CCTV in a store where a robot is operating – you trust it more if you know there’s a record of its actions.

- User Education and Control Interfaces: To truly feel in control, users must be educated on how the agent operates and what controls they have. This means the UI/UX around agent payments is important. For example, a user should easily grasp the budgets and limits in place (perhaps with a simple slider or setting: “Agent can spend up to $50/week”). They should receive clear notifications of agent activity in plain language (“Your travel agent bot spent $300 on Delta Airlines for your flight to NYC”). If something looks off, the user should know how to query or correct the agent. In some cases, providing an explanation interface – the agent could be asked “Why did you make this payment?” and it should answer – can greatly enhance trust through transparency. It ties into the explainability point in ethics.

- Building Trust Gradually: Initially, many users will likely only be comfortable with agents making very small or low-stakes payments. Over time, as they see the agent perform reliably, trust can increase. Systems should allow this gradual escalation: maybe start with a $20 limit, then $100, then higher as needed. Each successful cycle where the agent stays in bounds will build trust. It’s similar to how one might start an intern or new employee with small purchasing authority and increase it as they prove trustworthy. The difference is an AI agent’s trustworthiness is a function of its design and testing, but from a user perspective, seeing it “in the wild” behaving correctly is crucial. Providers of agent payment tools might even incorporate trust scoring – e.g., an agent that has operated for 6 months without issues could be given a higher trust level which streamlines some of its transactions.
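The preventative controls in this list (per-transaction and periodic limits, merchant restrictions, human review on overruns, and an emergency stop) can be sketched as a single policy check. The following Python is a minimal illustration under assumptions: `AgentBudget`, `Decision`, and all field names are hypothetical and do not correspond to any vendor's API. Following the behaviour described for limit overruns, an over-limit attempt is held for human review rather than silently dropped.

```python
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    APPROVE = "approve"          # within all limits, proceed autonomously
    NEEDS_HUMAN = "needs_human"  # held until a person approves or rejects
    BLOCK = "block"              # rejected outright


@dataclass
class AgentBudget:
    """Hypothetical per-agent spending policy; amounts are in cents."""
    per_txn_limit_cents: int
    weekly_limit_cents: int
    approved_merchants: set = field(default_factory=set)
    spent_this_week_cents: int = 0
    frozen: bool = False  # the user's emergency stop

    def evaluate(self, merchant: str, amount_cents: int) -> Decision:
        if self.frozen:
            return Decision.BLOCK
        if merchant not in self.approved_merchants:
            return Decision.BLOCK
        over_txn = amount_cents > self.per_txn_limit_cents
        over_week = self.spent_this_week_cents + amount_cents > self.weekly_limit_cents
        if over_txn or over_week:
            return Decision.NEEDS_HUMAN
        return Decision.APPROVE

    def record(self, amount_cents: int) -> None:
        """Call after a payment actually settles."""
        self.spent_this_week_cents += amount_cents


budget = AgentBudget(per_txn_limit_cents=2_000, weekly_limit_cents=5_000,
                     approved_merchants={"cloud-host"})
print(budget.evaluate("cloud-host", 1_500))
print(budget.evaluate("cloud-host", 2_500))
```

Raising `weekly_limit_cents` over time is one simple way to express the gradual escalation of trust described in the last bullet.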

In essence, no one wants a runaway AI with a blank check. By imposing strict limits, monitoring continuously, and creating mechanisms for human oversight, we ensure that users remain in charge of their agents’ finances. The combination of preventative controls (limits, whitelists), real-time oversight (notifications, dashboards), and after-the-fact accountability (logs, audits) addresses the major trust barriers. Transparency features – from dashboards to potential KYA certifications – give all parties (users, banks, merchants) confidence that an agent’s money is good and its behavior is accountable. These measures, many of which are being incorporated into early agent-payment offerings, make the difference between a terrifying prospect of uncontrolled AI spending and a practical, manageable tool that extends human capability.
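As an illustration of the KYA idea discussed above, the sketch below shows an issuer (for example, the user's bank) minting a signed token that binds an agent ID to its owner and a spending ceiling, and a verifier checking that token before accepting a payment. This is a deliberately simplified assumption: it uses an HMAC with a secret shared between issuer and verifier, whereas a production scheme would more likely use public-key certificates. The function names and claim fields are hypothetical.

```python
import base64
import hashlib
import hmac
import json
from typing import Optional


def issue_agent_token(secret: bytes, agent_id: str, owner: str, max_cents: int) -> str:
    """Issuer side: serialize the claims and append an HMAC-SHA256 tag."""
    claims = json.dumps(
        {"agent": agent_id, "owner": owner, "max_cents": max_cents},
        sort_keys=True,
    ).encode()
    tag = hmac.new(secret, claims, hashlib.sha256).digest()
    return (base64.urlsafe_b64encode(claims).decode()
            + "." + base64.urlsafe_b64encode(tag).decode())


def verify_agent_token(secret: bytes, token: str) -> Optional[dict]:
    """Verifier side: return the claims if the tag is valid, otherwise None."""
    try:
        claims_b64, tag_b64 = token.split(".")
        claims = base64.urlsafe_b64decode(claims_b64)
        tag = base64.urlsafe_b64decode(tag_b64)
    except ValueError:  # malformed token or invalid base64
        return None
    expected = hmac.new(secret, claims, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):  # constant-time comparison
        return None
    return json.loads(claims)


secret = b"demo-secret-shared-by-issuer-and-verifier"  # placeholder value
token = issue_agent_token(secret, "Agent123", "alice", 5_000)
print(verify_agent_token(secret, token))
```

A token whose claims have been altered, or one signed with a different secret, fails verification, which is the property that would stop an unregistered bot from masquerading as a verified agent.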

Adapting Existing Fintech Systems

The current financial ecosystem – banks, payment networks, fraud engines, etc. – was not built with autonomous AI agents in mind. To accommodate agentic payments, these systems will need to adapt. Fortunately, many modern fintech systems are flexible and software-driven, which means they can evolve relatively quickly. Here’s how key components of the financial world could be adapted or leveraged to support autonomous agents making payments:

- Banks and Neobanks with Open APIs: Traditional banks often have rigid processes for payments and may not allow third-party automation easily, but the rise of open banking and Banking-as-a-Service means more banks provide APIs. Neobanks (digital-first banks) in particular usually expose RESTful APIs to create accounts, initiate payments (ACH, SEPA, wire), and check balances. By using these, an agent can interact with a bank almost as a human would, but programmatically. For example, a neobank could let a user create multiple sub-accounts via API; a user could designate one sub-account for an AI agent and set its balance as the agent’s budget. The agent, via an API token, could initiate payments from that sub-account to vendors. This essentially uses existing bank plumbing (like ACH transfers) but in a controlled sandbox (the sub-account). Many banks already allow scheduling payments or rule-based triggers (like “sweep money if balance above X”); extending this to respond to agent requests is conceivable. What might banks need to change? Perhaps their risk models for API usage – if an agent is hitting the API frequently to, say, pay dozens of $1 invoices, the bank’s systems should recognize this as intended activity, not fraud. Banks might also develop new account types or user roles for AI agents – e.g., sub-accounts tagged as “bot account” that have certain restrictions. Neobanks and BaaS providers are likely to lead the way, as they can implement these features faster and partner with fintech developers to support agent use cases.

- Card Networks and Processors: Visa, Mastercard, and card processors (Stripe, Adyen, etc.) currently treat most transactions as originating from a human cardholder. As autonomous usage grows, networks might introduce flags in the transaction data to indicate that an AI agent is the one initiating. Why? Because then if a transaction looks bot-like (fast, repetitive), the network won’t automatically decline it thinking it’s fraud – it knows this is an authorized agent. They might also adapt their authorization protocols: for example, an agent could sign transactions with a digital certificate that the processor can verify (a bit like how 3D Secure lets an issuer authenticate the cardholder). Mastercard’s concept of “KYA” identification services suggests they foresee a role in helping authenticate agents to merchants. On the acquiring side (merchants), payment gateways might need updates too – e.g., some e-commerce sites use CAPTCHA or other bot detections at checkout. If the agent is a “good bot” the merchant wants to allow (because it’s a customer’s agent), there may need to be a way to bypass such measures, perhaps an API-based checkout flow for agents that is separate from the web UI. Stripe, for instance, has been exploring optimized checkouts for AI agents. If this becomes common, card networks may formalize standards for machine-initiated transactions, which could include extra data fields like “agent ID” or a tag indicating no 3D Secure challenge needed due to pre-authorization. It’s analogous to how recurring transactions have indicators so they are not declined for lack of 3D Secure each time. In short, networks can adapt by recognizing new categories of payers (agents) and adjusting fraud rules, authentication steps, and data formats accordingly.

- Fraud and Risk Engines: The fraud prevention systems used by banks and payment processors will need re-tuning. Currently, if they see a flurry of transactions from one account in seconds, or perfectly regular interval payments, they might flag it as a bot or script attack. In the agent world, that might be normal (e.g., an agent pays 100 microtransactions per minute for an API usage fee). These engines have to learn what “normal” looks like for each authorized agent, which could be very different from human patterns. This likely means applying machine learning on transaction data to cluster and identify agent behavior profiles vs human profiles. They need to flip the paradigm from “block anything that looks automated” to “block anything that looks maliciously automated, but allow known-good automation.” This is a nuanced challenge. One approach is incorporating the agent’s identity and history into fraud scoring: if an agent is known and has a whitelist, transactions matching its expected profile (right merchant, under limit, etc.) get a low risk score. If something falls outside (wrong merchant or time), even if an agent is sending it, it could indicate a compromise and thus be flagged. We might also see entirely new fraud startups focusing on agent transactions – as QED Investors speculated, separating good bots from bad bots is a new fraud paradigm to conquer. Traditional players like Stripe have sophisticated fraud systems (Radar) which they’ll likely extend to agent-based scenarios. In practice, an adaptation could be as simple as adding a setting: “this API key corresponds to an AI agent for user X, allow higher frequency transactions but enforce these specific rules…”. Over time, as data accumulates, AI/ML models can be trained specifically on agent behavior to detect rogue events (for example, an agent suddenly sending funds to an unusual recipient, which might indicate it is compromised or acting out of scope). In essence, fraud engines will move from a binary human/bot decision to a multi-class decision: human, trusted agent, unknown automation, malicious bot, etc., each with different handling.

- Enterprise Systems (ERP, Procurement, Accounting): Within companies, payments by agents should integrate with existing workflows. For example, if an AI agent is ordering supplies and making payments, those need to show up in the accounting system with proper coding (which department, which expense category, etc.). Enterprises likely will adapt their ERP to treat an AI agent somewhat like an employee or a procurement system user. Policies that already exist (like “purchases over $1000 require manager approval”) should be enforced on agents too. This can be done by connecting the agent to the same approval workflow tools. For instance, if an agent wants to pay an invoice, it could first create a request in the ERP; once approved, it gets a green light to actually execute the payment. Over time, if trust in the agent is high, the ERP might auto-approve routine things (like invoices under a certain amount that match a purchase order) for the agent, whereas a human might have needed to click approve. Additionally, internal audit and compliance teams will adapt by auditing agent decisions just as they would a human’s – maybe even more rigorously at first. This might involve reviewing the agent’s logs, ensuring the rules set up for it match company policy, and testing it (audit might simulate a scenario to see if the agent appropriately flags something). Fortunately, many enterprises already use a lot of automation in payments (like scheduled vendor payments, rule-based invoice approval), so an AI agent extends that rather than introduces something completely foreign. It’s more about the AI making judgment calls (like selecting a vendor or timing a payment) which previously were human decisions. Ensuring those align with internal controls is the focus.

- Tooling for Dynamic Governance: We touched on dynamic spend governance earlier in concept; here’s how existing systems could help. Some payment platforms allow dynamic rules – e.g., one could integrate a machine learning model in a decision engine that decides to approve or decline transactions in real-time. These decision engines (offered by companies like Feedzai, Featurespace, or even in-house at banks) could incorporate an agent’s context. For example, an enterprise could feed data to the engine like “Agent A’s current task load is high, it’s expected to spend more on cloud services today” so the engine doesn’t flag the surge as fraud but still watches for other anomalies. AI can also help govern budgets by predicting if an agent might exceed budget given its current run rate, and alerting finance proactively. Existing spend management tools (like those that track monthly burn rate) can be adapted to include agent spending and even simulate scenarios (“if we let the AI order inventory autonomously, what’s the projected spending?”). Essentially, existing fintech and finance tools will need small tweaks to accommodate an additional actor (AI agent) in the workflows, and possibly more real-time, event-driven handling rather than end-of-month reporting. The good news is that the trend in fintech has been towards more automation and real-time control anyway, so agentic payments push further in a direction already underway.

- Network Collaboration: Adapting systems is not just within one bank or company – there may be industry-wide collaboration needed. For instance, creating standards for agent identity (as noted, possibly via something like a KYA service) might involve banks, tech companies, and regulators working together. Payment networks might develop best practices for merchants to handle agent-driven transactions (like how to design an API for an “agent checkout”). Fraud info sharing might also expand: if one bank identifies a pattern of a malicious AI script trying stolen cards, that intel can be shared across the network to blacklist that agent fingerprint. Organizations like PCI Security Standards Council might eventually consider guidelines for machine-to-machine payments security. In adapting existing systems, human cooperation and setting common rules is as important as the tech adjustments.
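The shift from a binary human/bot decision to a multi-class one, as described for fraud engines above, can be sketched with simple rules. The Python below is an illustrative assumption rather than how any real fraud engine works: production systems would use learned models, and the names `Actor`, `AgentProfile`, `classify`, the registry and blocklist structures, and the `HUMAN_RATE_CEILING` value are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Actor(Enum):
    HUMAN = "human"
    TRUSTED_AGENT = "trusted_agent"
    UNKNOWN_AUTOMATION = "unknown_automation"
    MALICIOUS_BOT = "malicious_bot"


@dataclass(frozen=True)
class AgentProfile:
    allowed_merchants: frozenset
    max_amount_cents: int
    max_txns_per_minute: int


@dataclass(frozen=True)
class Txn:
    agent_id: Optional[str]  # None when no agent identity was declared
    merchant: str
    amount_cents: int
    txns_last_minute: int


HUMAN_RATE_CEILING = 5  # assumed: faster than this looks automated


def classify(txn: Txn, registry: dict, blocklist: set) -> Actor:
    if txn.agent_id is not None:
        if txn.agent_id in blocklist:
            return Actor.MALICIOUS_BOT
        profile = registry.get(txn.agent_id)
        if profile is None:
            return Actor.UNKNOWN_AUTOMATION  # declared but unregistered agent
        in_profile = (
            txn.merchant in profile.allowed_merchants
            and txn.amount_cents <= profile.max_amount_cents
            and txn.txns_last_minute <= profile.max_txns_per_minute
        )
        # A known agent acting outside its profile may be compromised.
        return Actor.TRUSTED_AGENT if in_profile else Actor.UNKNOWN_AUTOMATION
    if txn.txns_last_minute > HUMAN_RATE_CEILING:
        return Actor.UNKNOWN_AUTOMATION
    return Actor.HUMAN


# Each class gets different handling instead of a single allow/deny rule.
HANDLING = {
    Actor.HUMAN: "standard checks",
    Actor.TRUSTED_AGENT: "low-friction approval",
    Actor.UNKNOWN_AUTOMATION: "step-up review",
    Actor.MALICIOUS_BOT: "decline",
}
```

For example, a registered agent paying an allowed merchant 100 times a minute would be classified as a trusted agent here, while the same burst with no declared agent identity would be routed to step-up review.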

In summary, existing fintech infrastructure doesn’t need a complete overhaul to accommodate AI agents, but it does need tweaks and new layers. Open APIs and banking services provide entry points for agents, card networks and fraud systems need to update their models of “normal” behavior, and enterprise processes must loop agents into their controls. Many of these adaptations are extensions of digital banking trends (API banking, customizable card controls, real-time risk scoring). The unique twist is accommodating an autonomous decision-maker. The faster incumbents adapt, the less friction agent payments will encounter. It’s likely we’ll see innovative fintechs bridging gaps in the meantime – for example, companies offering “agent payment as a service” that wrap around existing rails to make them agent-friendly (some startups are already essentially doing this, by building on top of Stripe/Bank APIs as a wrapper for agents). Over time, if agent-based commerce becomes significant, the mainstream players (banks, networks) will natively support these use cases, just as they eventually embraced online payments and mobile wallets in the past.

Role of AI and ML in Fraud Detection & Spend Control

Ironically, while AI agents introduce new risks in payments, AI and machine learning themselves are key tools to mitigate those risks. AI/ML can enhance fraud detection, enforce dynamic controls, and even supervise agent behavior in real-time. Here’s how advanced algorithms play a role in keeping agent-initiated payments safe and well-governed:

- “Good Bot” vs “Bad Bot” Classification: As previously mentioned, a crucial challenge is distinguishing legitimate agent transactions from malicious ones. Traditional systems often labeled anything non-human as “bad,” but now we have a class of “good automation.” Machine learning can be trained to recognize patterns of trusted agents. This might involve features like: transaction timing patterns, typical merchant categories, amount ranges, IP address or environment from which requests originate, etc. Over many observations, an ML model could build a profile for each agent. For example, Agent Alice usually makes small purchases in US dollars, between 9am-5pm, from servers on AWS in the US. If suddenly a transaction comes at 3am from an IP in another country for a large amount in a different currency, the model would flag it as outlier behavior (potentially a hijacked agent or fraudulent attempt). Conversely, the model would learn to approve transactions that fit the agent’s normal pattern very quickly and with low friction. This dynamic profiling is more flexible than static rules (which might be too strict or too loose) and can adapt as the agent’s role evolves.

- Adaptive Fraud Detection: Fraud schemes will adapt to agents as well – for example, criminals might target vulnerabilities in how agents operate. AI-driven fraud detection can notice subtler clues than rule-based systems. For instance, if multiple agents across different users start making a similar odd payment, that might indicate a widespread attack (like an injection of a malicious prompt across the platform). Anomaly detection algorithms could catch that emerging pattern and alert operators. Additionally, AI can fuse data points: not just transaction data, but maybe logs from the agent’s decision process (if accessible) or other contextual info. For example, if an enterprise agent suddenly makes an unauthorized payment, an AI system might correlate it with a spike in network traffic or error logs on that agent, suggesting it was compromised by malware – something a transaction-only fraud check wouldn’t know. Thus, AI systems overseeing agents might integrate multiple data streams (cybersecurity logs, agent behavior logs, and financial transactions) to make holistic decisions about risk. This is an advanced form of fraud detection that blends IT security and payment security – likely important in enterprise contexts.

- Dynamic Spend Governance: Traditional spending controls are static (fixed limits, etc.), but AI can make them dynamic. For example, suppose an agent normally shouldn’t spend over $1000/day, but today it’s encountering an emergency (say, cloud costs spiked due to a DDoS attack it is mitigating). A dynamic system might recognize the anomaly in context and temporarily raise the agent’s spending cap to allow it to handle the situation, while simultaneously flagging this to admins. This could prevent a rigid rule from crippling operations, yet keep oversight. Conversely, if an agent that usually spends $1000/day suddenly has no legitimate need (as per its task pipeline) but tries to spend $5000, an AI system might intervene and lower the thresholds or require extra verification because it predicts this is likely an error or malicious. These adjustments can be done by predictive models that forecast an agent’s spending needs and compare to set budgets. If the forecast exceeds budget, it can alert or adjust. If actual spending deviates from forecast in a concerning way, it can clamp down. Essentially, it’s a feedback loop where AI monitors and tunes the agent’s “leash” in real time.

- AI as a Watcher Agent: We can think of deploying AI to watch AI. For instance, a “guardian AI” could monitor transaction streams and agent communications to ensure compliance with rules. If something looks off, the guardian can step in or notify a human. This is analogous to human managers overseeing employees, but here an AI manager oversees AI workers. In finance, this could be very effective – an AI overseer could check every proposed payment against a set of complex rules and contexts far faster than a human could. We already see glimmers of this in some systems: for example, AI that monitors trading algorithms to prevent accidents. A guardian AI might catch that an agent is repeatedly trying a payment that’s failing and pause it (to avoid, say, dozens of duplicate charges), or notice that two different agents are interacting in a way that could be collusive or unintended. While somewhat futuristic, it underscores that as we give AI agents autonomy, we can also employ AI oversight to manage that autonomy at scale.

- Preventing Fraudulent Agents: Another angle is using AI to scrutinize the agents themselves when they’re onboarded. If third-party developers create AI agents (say an app or skill you install), one might use AI analysis to vet the code or behavior in a sandbox. This could catch if an agent is likely to misbehave (for example, if it tries to send data to an unknown server or make external calls it shouldn’t). Though more about cybersecurity, it feeds into payment protection by reducing the chance a malicious or poorly written agent ever gets control of funds. AI tools for code analysis or policy compliance could be part of the agent deployment pipeline, especially in enterprises that might use many AI agents from various vendors.

- Continuous Learning and Improvement: As agentic payments are new, there will be a learning curve. AI/ML systems excel at improving with more data. Every incident (be it a false decline, a fraud case, or an agent error) can be fed back into the model to refine it. Over time, the fraud detection AI might discover new features that indicate risk (maybe something like “when Agent X’s payments deviate by >Y% from its 7-day moving average, with no corresponding increase in its input workload, there’s Z% chance of error”). These insights can then translate into better rules or alerts. Machine learning models could even personalize their strategies to each user’s risk tolerance: one user might prefer the AI be very tightly watched (risk-averse), another might accept more risk for smoother operation. The models could adjust thresholds accordingly if instructed (similar to how some credit card fraud systems let you set travel notifications or turn off certain protections if you want fewer declines).

- RegTech and Compliance AI: Beyond just fraud, AI can help ensure regulatory compliance dynamically. For example, AI could screen agent payments against sanctions lists in real time (many banks already use AI for transaction monitoring). If an agent tries to pay an entity that’s newly added to a blacklist, AI can catch that instantly. AI can also check whether an agent’s pattern of payments looks like structuring (breaking payments into small pieces to avoid reporting thresholds). The agent may not intend this, but the pattern could still resemble money laundering behavior. By catching such patterns, the system can stop an agent from unintentionally causing a compliance issue for the user. This is especially relevant for enterprise agents handling large flows of money – the company must remain compliant with AML laws even if an AI is initiating the wires. Machine learning models trained on typologies of illicit finance could monitor agent transactions just as they do human ones.
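
To make the structuring check described above more concrete, here is a minimal sketch in Python. It is a rule-based stand-in for what a trained model would do, and everything in it is an assumption for illustration: the `Payment` record, the `flag_possible_structuring` function, the 10,000 threshold, and the 24-hour window are hypothetical and not taken from any regulation or product.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from decimal import Decimal


@dataclass(frozen=True)
class Payment:
    payee: str
    amount: Decimal
    at: datetime


def flag_possible_structuring(payments, threshold=Decimal("10000"),
                              window=timedelta(hours=24)):
    """Return payees whose sub-threshold payments add up to the threshold
    within `window`. Hypothetical heuristic; threshold and window are placeholders.
    """
    by_payee = {}
    for p in sorted(payments, key=lambda p: p.at):
        if p.amount >= threshold:
            continue  # a single large payment is visible on its own, not "structured"
        by_payee.setdefault(p.payee, []).append(p)

    flagged = set()
    for payee, items in by_payee.items():
        start = 0
        running = Decimal("0")
        for end, p in enumerate(items):
            running += p.amount
            # Drop payments from the left until the span fits inside the window.
            while p.at - items[start].at > window:
                running -= items[start].amount
                start += 1
            # Require at least two payments before calling it a pattern.
            if end > start and running >= threshold:
                flagged.add(payee)
                break
    return flagged
```

A flagged payee would not necessarily mean wrongdoing; in this sketch it is only a signal to pause the agent and route the case to a human or a richer model.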

In summary, while on one hand we’re tasking AI agents to spend money, on the other hand we’re deploying AI to oversee that spending. This creates an interesting layered AI system – one doing the work, another (or several) watching the work. The goal is a self-reinforcing safety net: as agents get smarter and potentially more complex, so do the systems that guard them. Machine learning is essential to adapt to the new patterns that will emerge with autonomous payments, because hard-coding all scenarios is impossible. By continuously learning, AI-driven governance systems can handle novel situations and evolve rules that humans might not have conceived upfront. Early examples in industry already emphasize profiling agent behavior and doing real-time risk assessment, which are classic ML-driven tasks. It’s likely that as agent payments scale, every major payment platform will incorporate an AI-based fraud engine tuned for agents, and every enterprise will have AI-enhanced monitoring for their autonomous processes. This “AI for AI governance” ensures that increased autonomy doesn’t mean increased chaos – instead, it can actually lead to more control and security than legacy systems, because well-designed AI can react faster and more precisely than humans in managing transaction risk.
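
As one illustration of the behavior profiling discussed in this section, the moving-average heuristic mentioned under continuous learning could start life as a simple rule before any model is trained. The sketch below is hypothetical: the function name `deviation_alert` and both tolerance values are assumptions, and a production system would likely learn such thresholds from data rather than hard-code them.

```python
from statistics import mean


def deviation_alert(daily_spend, today_spend, daily_workload, today_workload,
                    spend_tolerance=0.5, workload_tolerance=0.2):
    """Flag spend that rises well above its trailing average while the
    agent's input workload has not risen correspondingly.

    `daily_spend` and `daily_workload` are trailing histories (e.g. 7 days).
    Tolerances are illustrative placeholders, expressed as fractions.
    """
    if not daily_spend or len(daily_spend) != len(daily_workload):
        raise ValueError("need equal-length, non-empty histories")

    avg_spend = mean(daily_spend)
    avg_work = mean(daily_workload)
    if avg_spend == 0:
        return today_spend > 0  # no spending baseline: any spend deserves review

    spend_change = (today_spend - avg_spend) / avg_spend
    if avg_work == 0:
        # No workload baseline: treat any new workload as an unbounded increase.
        work_change = float("inf") if today_workload > 0 else 0.0
    else:
        work_change = (today_workload - avg_work) / avg_work

    return spend_change > spend_tolerance and work_change <= workload_tolerance
```

For example, with a trailing average of 100 per day in spend and 50 tasks per day, a day with 180 in spend and 52 tasks would be flagged, while 180 in spend alongside 90 tasks would not.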

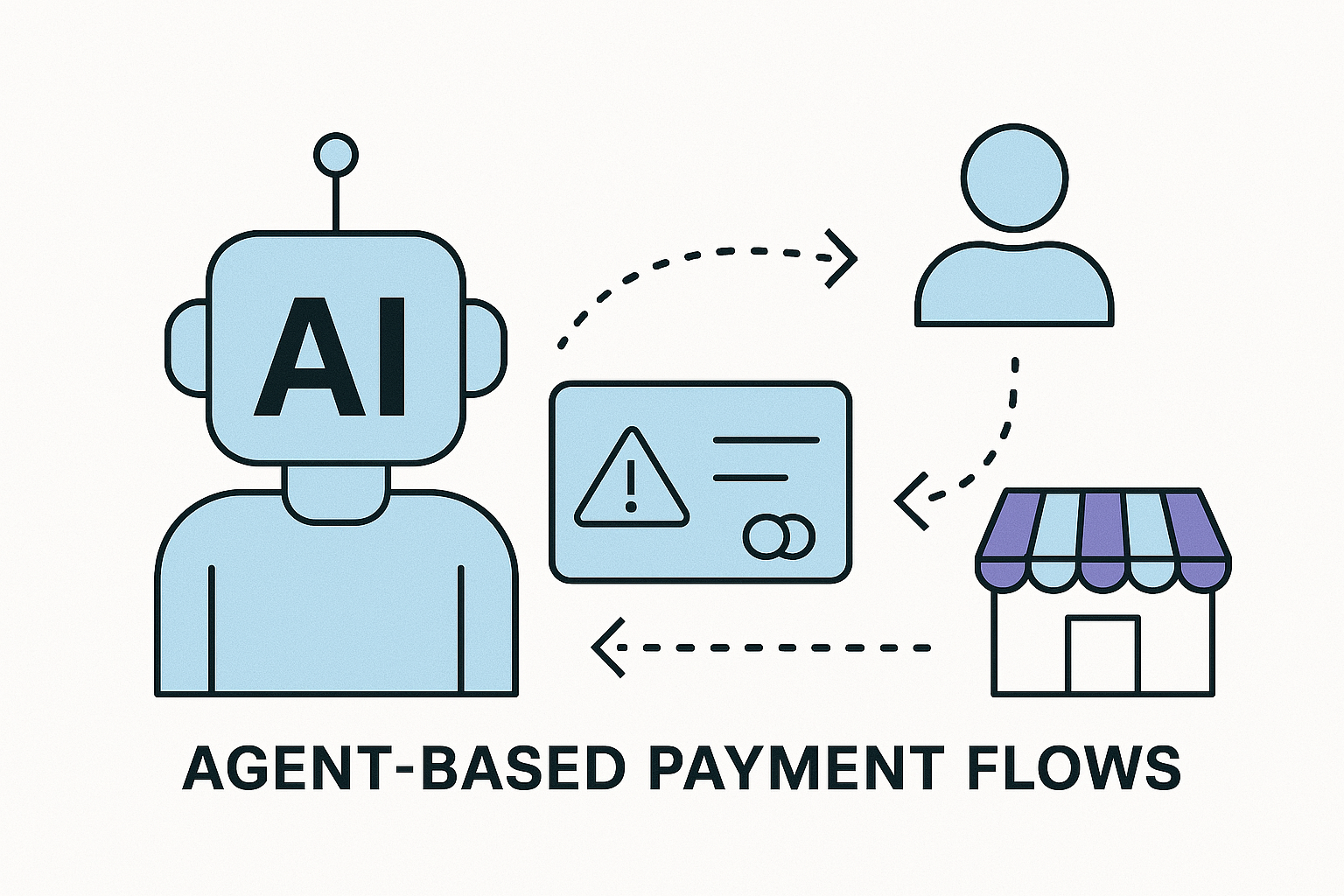

Conceptual Models for Agent-Based Payment Flows

Autonomous agent payments can be orchestrated in various structural ways depending on the use case. These conceptual models describe how an agent might be authorized to spend and how those spending processes are organized. They aren’t mutually exclusive – an agent platform might employ several of these models in combination.

- Pay-Per-Task (Microtransaction Model): In this model, an agent pays for each task or service usage as needed, effectively handling microtransactions or per-action payments. Think of an agent that has to use a third-party API that charges $0.01 per request – instead of the user pre-paying a large amount, the agent could pay those cents on the fly as it calls the API. This model is especially relevant in a future scenario of agent marketplaces: one agent might rent the service of another agent or an API just for one task and pay instantly for that single task. It’s akin to a human buying a single song or single article rather than a subscription. The advent of cryptocurrencies and fast payment systems is what makes pay-per-task viable, because they reduce the friction and cost of very small payments. If each task can be monetized and settled immediately, agents could offer “hyper-specific, on-demand services at scale,” enabling new business models like pay-per-task or pay-per-insight. For example, an AI writing assistant agent might sell a one-time essay edit to a user’s agent for $0.50, which is paid right after the service is delivered. On the spending side, pay-per-task means the user’s agent must be able to make small payments continually: it needs a robust micropayment mechanism (perhaps via a prepaid fund or linked wallet) that, given the volume, does not require separate human approval each time. This model emphasizes a tight coupling of action and payment – great for accountability (you pay exactly for what was done) and budgeting (costs scale linearly with usage). One challenge is ensuring transaction fees or overhead don’t outweigh the micro amounts; that’s where specialized systems (layer-2 blockchain solutions, agent-specific payment networks, or aggregated billing after micro-auths) might come in. But conceptually, pay-per-task is likely to flourish in agent-to-agent interactions and in IoT contexts. 
Imagine a future where your smart car pays a few cents to every traffic light’s AI for optimal routing information, or your agent pays another agent a nickel to quickly summarize a news article – millions of such microtransactions could happen behind the scenes, settled by the second. This model offers maximum granularity of control and cost allocation.

- Budget Envelopes (Allowance Model): This model draws from the classic budgeting strategy of envelope budgeting – you allocate certain amounts of money to specific purposes. For agents, this means giving each agent or each category of an agent’s spending a defined budget bucket. For instance, you might allocate $200/month for your household AI to buy groceries and $50/month for it to buy digital services. The agent is aware of these limits and plans its spending accordingly (much like a person with an allowance). Enterprises could do the same: an AI agent managing marketing has a $10k budget for the quarter. The “envelope” can be a virtual wallet or sub-account that the agent draws from. This model ensures that even if the agent optimizes within its domain, it won’t overspend the broader plan. It’s a straightforward way to enforce financial discipline. The agent can also be designed to self-monitor its budget – if halfway through the month it’s spent too much, it might adjust by deferring less critical purchases. It encourages a form of financial reasoning in the agent: treat the budget as a hard constraint and make the best use of it. Technically, budget envelopes can be implemented via separate accounts (which is clean but requires moving money in and out) or via internal accounting in the agent, with checks, if a single account is used. Many current implementations essentially use this model by giving agents prepaid wallets: that wallet is the envelope, and once it is empty, the agent stops until it is refilled. Budget envelopes can also be hierarchical: e.g., an agent might have a yearly budget broken into monthly envelopes, and if it saves in one month, it can roll over to the next. Or multiple agents each have envelopes and a higher-level controller (or the user) reallocates between them if needed. This model aligns well with how businesses do departmental budgets or how people handle discretionary spending, so it’s intuitive to manage. 
It gives users a clear handle on costs: you know the maximum loss or spend from each agent per period. One must be careful to set the envelope appropriately – too small could stifle the agent’s usefulness, too large and you risk more exposure. But since envelopes are adjustable, one can iterate to find the sweet spot, and possibly use AI (as mentioned earlier) to predict and suggest optimal budgets per envelope.

- Event-Driven Spending (Trigger-Based Model): Here, payments are initiated not on a continuous basis or by request, but in response to specific events or conditions. An agent operating under this model listens for certain triggers and then unlocks funds or executes payments when those triggers occur. This is akin to setting rules: “If X happens, pay Y.” We have rudimentary versions of this already (like an automatic stock trade “buy at $price” or an IFTTT rule “if my account drops below $100, transfer $50”). With AI agents, these triggers can be far more complex and the actions more involved. For instance, consider an IoT maintenance agent. Event: a sensor detects a machine part failure. Response: the maintenance agent verifies the issue and automatically places an order for a replacement part, paying for overnight shipping. The payment only happens when the event (part failure) occurs, and is exactly tied to that event’s resolution. Another example: a personal health AI might have a rule “if pharmacy prices for my medication drop below a threshold then buy a 3-month supply.” The spending is event-driven (price drop event triggers the purchase). This model ensures the agent isn’t spending arbitrarily; it’s responding to external needs or opportunities. It is very useful for just-in-time purchasing and can optimize costs or efficiency (like buying only when needed or when favorable). Implementing event-driven spending requires the agent to be integrated with event streams or monitors. The architecture often involves an event bus or message queue where various systems publish events (inventory low, price high, task completed, etc.), and the agent subscribes to relevant ones. When an event arrives, the agent’s logic (or a smart contract) decides if a payment should be made. 
Smart contracts are actually a natural fit for some of this: you can lock funds in a contract that auto-releases payment when on-chain or oracle-reported events occur (e.g., an insurance payout when a weather oracle reports a hurricane). For agents, not all triggers are so clear-cut, but AI allows recognition of complex events (maybe a pattern of sensor data triggers a maintenance action). Event-driven architecture is seen as key to scaling AI agents, and that extends to payments: by reacting to events, agents can handle financial decisions in a scalable, decoupled way rather than constant human prompts. The challenge is ensuring the triggers are reliable and the agent’s response is appropriate – essentially encoding the business logic for spending decisions. But many domains (like routine restocking, threshold-based actions) are well-suited to this. This model overlaps with pay-per-task sometimes (the event is the task completion that triggers payment), or with budget envelopes (the agent still has an envelope but only spends when events demand).
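
The event-bus arrangement described for event-driven spending can be sketched in a few lines of Python. This is a toy in-process version under stated assumptions: `EventBus`, `SpendingAgent`, the `part_failure` topic, the event fields, and the `pay` callback are all hypothetical names, and a real deployment would use a message queue and a payment provider's API instead.

```python
from collections import defaultdict
from decimal import Decimal


class EventBus:
    """Minimal in-process publish/subscribe bus (stand-in for a message queue)."""

    def __init__(self):
        self._handlers = defaultdict(list)

    def subscribe(self, topic, handler):
        self._handlers[topic].append(handler)

    def publish(self, topic, payload):
        # Return each subscriber's decision so callers can log or audit it.
        return [handler(payload) for handler in self._handlers[topic]]


class SpendingAgent:
    def __init__(self, pay, auto_limit):
        self._pay = pay                # hypothetical hook: pay(payee, amount)
        self._auto_limit = auto_limit  # largest amount the agent may spend unasked
        self.pending_approval = []

    def on_part_failure(self, event):
        if not event.get("verified"):
            return "ignored"           # unverified sensor readings never move money
        quote = Decimal(event["replacement_cost"])
        if quote > self._auto_limit:
            self.pending_approval.append(event)
            return "needs_approval"    # escalate to a human instead of paying
        self._pay(event["supplier"], quote)
        return "paid"
```

Wiring it up would look like `bus.subscribe("part_failure", agent.on_part_failure)`, after which any system that publishes a verified failure event below the limit causes exactly one payment, and anything larger lands in `pending_approval`.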

Subscription / Recurring Model: An agent might also operate under a recurring payment model, where it has authority to pay certain bills or subscriptions on a schedule. This is a subset of event-driven spending (the event is the passage of time or a billing cycle). For example, an agent managing your subscriptions could automatically pay your monthly Netflix and gym membership. The novelty is that the agent could also decide when to cancel or renegotiate these based on your usage (but as long as they are active, it pays them). This is similar to how we use autopay now, but with an AI layer that could optimize – e.g., if a subscription price increases unexpectedly, the agent might alert you or decide to cancel if it knows you barely use it. This model matters because a lot of B2C agent use might start here: managing recurring expenses. It’s low-hanging fruit for automation (already partially automated by merchants, but an agent could consolidate and oversee it in the user’s interest).

Performance-Based or Outcome-Based Payments: In some scenarios, an agent might only pay upon successful completion of something (this shades into smart contracts and multi-party workflows). For example, an agent hires a freelance AI (say, an image generator for a project) and escrows the payment, releasing it only when the image meets certain criteria. This is like a mini contract enforcement: the payment is contingent on an outcome. Agents could handle this by evaluating the outcome themselves (AI judge) or by predefined checks. It’s a more complex model but aligns with how businesses want to pay for results, not efforts.
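
A minimal sketch of the outcome-based escrow idea, using the "predefined checks" variant rather than an AI judge, might look like the following. The `Escrow` class, its states, and the example checks are hypothetical; no real escrow service or smart-contract API is implied.

```python
from decimal import Decimal
from enum import Enum


class EscrowState(Enum):
    FUNDED = "funded"
    RELEASED = "released"   # paid out to the provider
    REFUNDED = "refunded"   # returned to the paying agent


class Escrow:
    def __init__(self, amount, checks):
        self.amount = Decimal(amount)
        self.checks = checks  # callables taking the deliverable, returning bool
        self.state = EscrowState.FUNDED

    def settle(self, deliverable):
        """Release funds only if every acceptance check passes; settle at most once."""
        if self.state is not EscrowState.FUNDED:
            raise RuntimeError("escrow already settled")
        passed = all(check(deliverable) for check in self.checks)
        self.state = EscrowState.RELEASED if passed else EscrowState.REFUNDED
        return self.state
```

For the image-generation example, the checks could be as simple as `lambda d: d["width"] >= 1024` and `lambda d: d["format"] == "png"`; an AI judge would slot in as one more callable in the same list.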

Bringing it together, these conceptual models aren’t exclusive. A comprehensive agent system might allocate budgets (envelopes), allow pay-per-task within those budgets, and have certain event-driven triggers for larger actions, all while using AI to adjust things dynamically. For example: you give your home AI a monthly budget (envelope). Within that, it has freedom to pay-per-task for routine things (buy groceries when needed, pay per song it plays from a music service, etc.). Additionally, you set an event-driven rule: if an appliance breaks (event), it can spend up to a certain amount to get a replacement part or call a repair service, otherwise it should wait for your approval. This illustrates a multi-model approach.
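
The envelope that anchors this combined setup can be sketched with internal accounting alone. The Python below is an illustrative assumption, not a reference design: `Envelope`, `BudgetExceeded`, and `roll_over` are hypothetical names, and a production system would back the numbers with a real sub-account or prepaid wallet.

```python
from decimal import Decimal


class BudgetExceeded(Exception):
    """Raised when a payment would take an envelope past its limit."""


class Envelope:
    def __init__(self, name, limit):
        self.name = name
        self.limit = Decimal(limit)
        self.spent = Decimal("0")

    @property
    def remaining(self):
        return self.limit - self.spent

    def spend(self, amount):
        """Record a payment, treating the limit as a hard constraint."""
        amount = Decimal(amount)
        if amount <= 0:
            raise ValueError("amount must be positive")
        if amount > self.remaining:
            raise BudgetExceeded(
                f"{self.name}: {amount} exceeds remaining {self.remaining}")
        self.spent += amount

    def roll_over(self, next_limit):
        """Open the next period's envelope, carrying unspent funds forward."""
        return Envelope(self.name, Decimal(next_limit) + self.remaining)
```

Each pay-per-task purchase would call `spend` before money moves, so a rejected call leaves the envelope unchanged and the agent must defer the purchase or ask the user to top up.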

Importantly, these models mirror common human financial patterns (piecemeal spending, budgets, triggers like bill due dates). By structuring agent payments in familiar ways, it’s easier to slot them into everyday life and business and easier for users to understand and trust them.

The pay-per-task model highlights new possibilities unlocked by autonomous systems – an economy of microtransactions that wasn’t feasible at human scale. The budget envelope model emphasizes safety and control by partitioning funds. The event-driven model emphasizes reactivity and efficiency – money moves only when needed. In designing agent payment capabilities, thinking in terms of these patterns helps ensure we cover the necessary use cases and constraints.
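
The fee-overhead concern raised for pay-per-task can be made concrete with a small aggregated-billing sketch. The fee schedule here (a fixed 0.30 plus 2.9% per settlement) is purely an assumed example, and `MicroChargeBatcher` is a hypothetical helper, not a feature of any named payment network.

```python
from decimal import Decimal

FIXED_FEE = Decimal("0.30")     # assumed per-settlement fee, illustrative only
PERCENT_FEE = Decimal("0.029")  # assumed proportional fee, illustrative only


def settlement_fee(amount):
    """Fee charged for settling a single payment of `amount`."""
    return FIXED_FEE + amount * PERCENT_FEE


class MicroChargeBatcher:
    """Accumulate tiny charges and settle them together once a threshold is reached."""

    def __init__(self, settle_at):
        self.settle_at = Decimal(settle_at)
        self.pending = Decimal("0")
        self.settled = []  # amount of each settlement actually made

    def charge(self, amount):
        self.pending += Decimal(amount)
        if self.pending >= self.settle_at:
            self.flush()

    def flush(self):
        """Settle whatever is pending; a no-op when nothing is owed."""
        if self.pending > 0:
            self.settled.append(self.pending)
            self.pending = Decimal("0")
```

Under these assumed fees, 1,000 one-cent charges settled individually would incur 300.29 in fees on 10.00 of purchases, whereas batching them into two 5.00 settlements incurs 0.89. The trade-off is that the provider extends a small amount of credit between settlements.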

Short-Term Solutions vs. Long-Term Innovations

The path to fully autonomous agent payments will likely progress in stages. Short-term solutions will leverage existing technology with minimal changes, allowing us to safely test and gain experience. Long-term innovations may overhaul how payments work or introduce entirely new paradigms as technology and regulations catch up. Here we compare what can be done now (or very soon) versus what we envision in the future:

Short-Term Feasible Solutions: